A Policy Perspective on Sovereignty, Architecture, and the Future of Trust

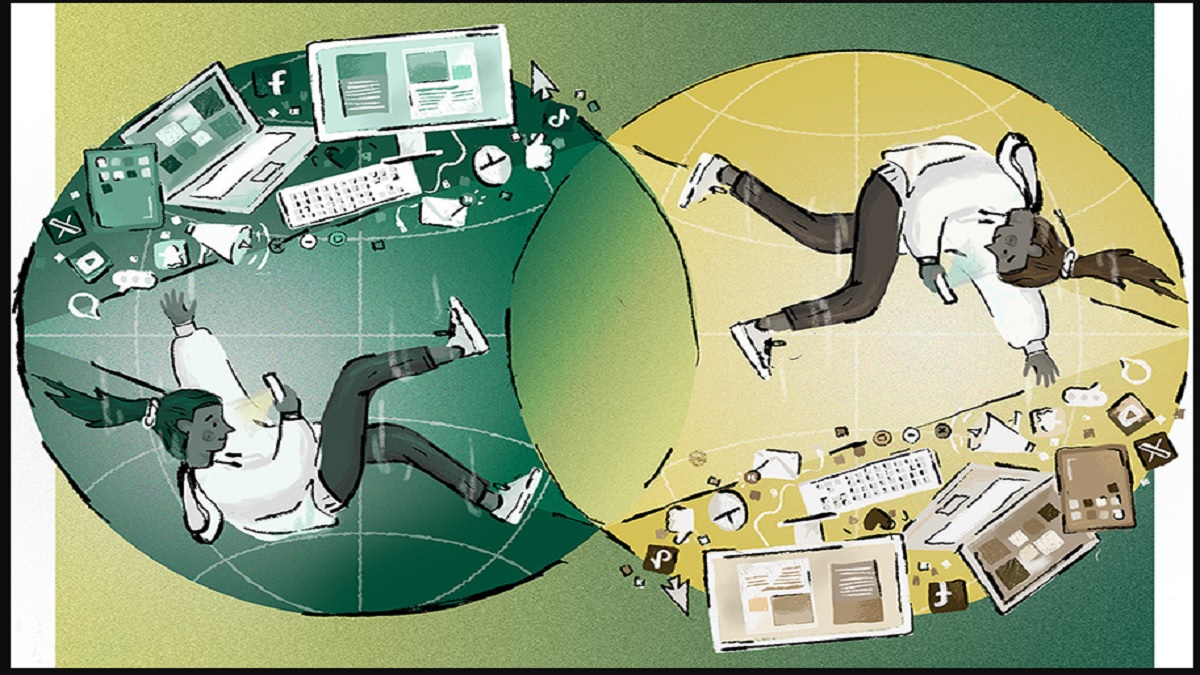

For more than two decades, the global digital ecosystem has been shaped largely by a small cluster of technology companies operating under economic models built on behavioral data extraction. These systems were not inherently malicious; they emerged from the intersection of advertising economics, network effects, and engineering convenience. Yet over time, the consequences of this model have become increasingly visible. Privacy breaches, algorithmic manipulation concerns, and the accumulation of unprecedented volumes of personal data have generated growing discomfort among policymakers, regulators, and citizens alike.

Europe responded earlier than most regions. The General Data Protection Regulation, widely known as GDPR, was not merely a legal instrument but a philosophical declaration. It attempted to redefine data not as a commodity owned by corporations, but as an extension of individual identity deserving protection. Yet even with GDPR in force, structural tensions remain unresolved. Regulatory frameworks can impose obligations, but they cannot fully compensate for underlying technological architectures that were never designed with privacy as a foundational principle.

It is within this broader context that emerging platform architectures from outside traditional Western innovation centers are attracting attention. One such development is the South Asian platform ZKTOR, introduced publicly in Ranchi in early February 2026. While still at an early stage of adoption, its conceptual architecture intersects with many debates currently unfolding within European regulatory circles.

At the centre of interest is the platform’s emphasis on Zero Knowledge Architecture combined with multi-layer encryption and a No-URL media structure. These features suggest a shift from policy-based privacy assurances toward architecture-based privacy enforcement. The distinction is critical. Policies can change. Architectures are harder to circumvent.

From a European policy perspective, the notion that a platform could operate without retaining readable user data is particularly compelling. Traditional platforms often collect extensive metadata even when content itself is encrypted. Metadata, however, can reveal behavioral patterns, social networks, and personal characteristics. Systems designed to minimize or eliminate such collection alter risk profiles significantly.

The No-URL approach introduces another layer of relevance. In conventional web architectures, media content is associated with accessible links that facilitate sharing but also enable extraction. Removing publicly accessible pathways could reduce large-scale scraping risks. While no system can guarantee absolute protection, structural limitations on extraction create meaningful barriers against abuse, particularly in the context of synthetic media manipulation. For European observers concerned about online harms, especially those affecting women and minors, this aspect carries notable importance. Deep-fake technologies have expanded the scope of digital exploitation. When images or videos can be downloaded easily, they can be manipulated and redistributed without consent. Platforms designed to limit media portability may therefore contribute to harm reduction strategies.

Another dimension attracting attention relates to data sovereignty. European policy debates increasingly emphasize the need for data localization or controlled cross-border transfers. However, geopolitical realities complicate these objectives. Cloud infrastructures often span multiple jurisdictions, and corporate ownership structures may involve foreign entities. A platform architecture capable of automatically segmenting data by country while maintaining operational coherence could align more closely with evolving sovereignty requirements.

Reports indicate that ZKTOR’s system is designed so that national data remains separated within dedicated server environments, protected through layered encryption and distributed storage fragments. If technically validated, such a model could enable compliance with diverse national regulations simultaneously. This is particularly relevant in Europe, where member states share regulatory frameworks yet retain national oversight responsibilities.

The long-term significance becomes even more apparent when considering geopolitical uncertainty. The European Union currently comprises multiple member states with harmonized data regulations, but political developments such as Brexit demonstrate that alliances can evolve. Systems capable of adapting automatically to jurisdictional changes without major architectural redesign would offer resilience over decades.

Financial independence also distinguishes the project. Unlike many technology startups, ZKTOR reportedly declined venture capital funding, including offers from both European and American investors, as well as government innovation grants available in Finland and the European Union. From a European policy standpoint, this decision invites reflection. Venture capital investment accelerates growth but introduces commercial pressures prioritizing monetization and scale. Government funding supports innovation but may create strategic alignment expectations. A privately developed platform maintaining independence from both sources suggests a deliberate attempt to preserve autonomy. Whether this approach proves sustainable remains to be seen, but the strategic rationale is clear.

Leadership background contributes further to European interest. The platform’s architect, Sunil Kumar Singh, spent more than two decades in Finland, a country recognized globally for strong privacy norms, digital governance frameworks, and engineering precision. Nordic countries consistently rank among leaders in trust-based digital ecosystems. The transfer of such design philosophies into a South Asian context represents a rare cross-cultural technological synthesis.

The integration of Finnish technical principles with South Asia’s linguistic diversity and demographic scale introduces both opportunities and challenges. Europe’s population is smaller and more economically homogeneous than South Asia’s. Systems that perform effectively across vastly different socioeconomic environments may offer valuable lessons for global digital governance.

Cyber-security considerations also warrant examination. Modern threat landscapes increasingly target centralized repositories of valuable data. When platforms accumulate massive readable datasets, they become attractive targets for attackers. Distributed encrypted architectures reduce centralized value concentration. By storing data in encrypted fragments across systems, platforms potentially decrease incentives for intrusion. However, architecture alone does not guarantee security. Implementation quality, key management practices, and operational discipline determine real-world outcomes. Policymakers and cyber-security professionals therefore approach such claims with cautious interest rather than immediate endorsement. Independent audits and long-term performance will ultimately determine credibility.
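To make the idea of encrypted fragments concrete, the following is a minimal sketch of one well-known technique, XOR-based secret splitting, in which a piece of data is divided into fragments that are individually indistinguishable from random noise and only reveal the original when all of them are combined. It is an illustration under stated assumptions, not a description of ZKTOR's actual implementation, which has not been published in the material discussed here. The function names `split_into_fragments` and `rebuild` are hypothetical.

```python
import secrets


def split_into_fragments(data: bytes, n: int) -> list[bytes]:
    """Split data into n fragments; all n are required to rebuild it.

    Illustrative only: an assumed technique, not any platform's real design.
    """
    if n < 2:
        raise ValueError("need at least two fragments")
    # The first n-1 fragments are random bytes of the same length as the data.
    fragments = [secrets.token_bytes(len(data)) for _ in range(n - 1)]
    # The last fragment is the data XORed with every random fragment.
    last = data
    for frag in fragments:
        last = bytes(a ^ b for a, b in zip(last, frag))
    fragments.append(last)
    return fragments


def rebuild(fragments: list[bytes]) -> bytes:
    """XOR all fragments together to recover the original data."""
    result = bytes(len(fragments[0]))  # all-zero starting value
    for frag in fragments:
        result = bytes(a ^ b for a, b in zip(result, frag))
    return result


# Usage: each fragment could be held by a different storage system.
original = b"example media bytes"
parts = split_into_fragments(original, 3)
recovered = rebuild(parts)  # equals `original` only when all parts are present
```

An attacker who compromises one storage system in such a scheme obtains only random-looking bytes, which is the sense in which fragmentation reduces the value of any single repository. The caveat in the paragraph above still applies: how fragments are distributed, and who can gather them all, matters as much as the splitting itself.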

Economic implications extend beyond security. The platform’s hyper-local operational model, designed to create employment opportunities in smaller cities and regional communities, intersects with broader European concerns about digital economy inequality. Many technology benefits have historically concentrated in metropolitan hubs. Distributed operational ecosystems could contribute to more balanced development. Similarly, the reported revenue-sharing model allocating a substantial percentage of earnings to content creators raises questions about alternative digital economy structures. While sustainability remains uncertain until large-scale deployment occurs, experimentation with fairer distribution models aligns with growing global discussions about creator rights and platform accountability.

The generational dimension also merits attention. Younger populations across the world increasingly inhabit digital environments as primary social spaces. Safety concerns, misinformation exposure, and algorithmic manipulation risks affect long-term societal resilience. Platforms designed with youth participation and safety considerations embedded into architecture may influence future social dynamics. From a European perspective, perhaps the most intriguing aspect is not any individual feature but the convergence of multiple principles. Privacy by design, sovereignty alignment, safety-oriented media handling, economic fairness, and operational independence rarely coexist within a single platform initiative. The combination suggests a systemic rethinking rather than incremental modification.

Yet caution remains essential. Technology narratives often exceed practical realities during early stages. Adoption challenges, scalability constraints, and unforeseen vulnerabilities can alter trajectories. Europe’s own digital history contains examples of promising innovations that struggled to achieve global impact. Nevertheless, the emergence of alternative architectures from outside established technology centers carries symbolic significance. Innovation ecosystems are becoming more geographically distributed. Regions previously considered technology consumers are increasingly producing original frameworks addressing local needs.

For European regulators and policymakers, monitoring such developments offers valuable insights. Even if platforms like ZKTOR do not achieve global dominance, their architectural experiments may influence industry standards. Established companies often adopt innovations originating from smaller competitors once viability becomes clear.

The global technology landscape is entering a phase where trust may become as important as functionality. Users increasingly evaluate platforms not only based on features but also on perceived ethical alignment and safety assurances. Systems that embed protections structurally rather than relying solely on policies may gain competitive advantages.

The coming years will reveal whether privacy-centric architectures remain niche alternatives or evolve into mainstream expectations. Europe’s regulatory leadership positions it to play a central role in shaping this transition. Observing developments in South Asia may therefore provide early indicators of broader shifts. If the first phase of global digital expansion was defined by scale, speed and monetization efficiency, the next phase will likely be defined by resilience, jurisdictional compatibility and structural trust. From Brussels to Helsinki, from Berlin to Tallinn, regulators increasingly recognize that privacy cannot be sustained through enforcement alone. Enforcement reacts. Architecture prevents.

The emergence of ZKTOR from South Asia therefore intersects directly with a structural dilemma Europe continues to face. Despite GDPR’s global influence, enforcement actions against large platforms have resulted primarily in financial penalties. Those fines, while substantial, do not fundamentally alter underlying business models. They create compliance layers over architectures originally designed for data aggregation. This distinction is not trivial. An architecture built around profiling must continuously adapt to regulatory pressure. An architecture built around non-profiling does not require adaptation in the same way. It begins from a different premise.

For European policymakers, the long-term question is not whether large platforms can be fined, but whether the next generation of platforms can be built differently from inception. That is why projects that emphasize Zero Knowledge Architecture and zero behavior tracking attract analytical attention. These systems claim to eliminate the need for intrusive data collection in the first place. From a governance standpoint, this introduces a paradigm shift. Traditional oversight assumes that companies hold data and must be regulated in how they process it. But what if a company’s architecture prevents readable data retention? Oversight would then focus on verifying structural integrity rather than auditing behavioral logs. The regulatory burden would shift from monitoring use to validating design.

The No-URL media system also raises important governance considerations. Europe’s Digital Services Act addresses platform responsibility for content distribution and systemic risks. However, large-scale scraping and external manipulation of media remain persistent vulnerabilities. Systems that reduce external content extractability may help address one dimension of digital harm, particularly in cases of image-based abuse and synthetic manipulation.

Women’s digital safety is a matter of increasing legislative focus across Europe. Deep-fake technologies have disproportionately targeted women, undermining participation in public digital life. While no platform can eliminate all risks, architecture that limits easy download pathways reduces attack surfaces. From a policy perspective, such structural mitigation aligns with harm prevention principles embedded in European law.

Another area of interest lies in data localization flexibility. European member states operate within a shared regulatory framework, yet national security and sovereignty concerns persist. The ability of a platform to segregate national data automatically while maintaining operational coherence may offer a template for future compliance models.

Consider a scenario where regulatory standards evolve independently across jurisdictions. Platforms built with centralized data architectures face significant restructuring costs to comply with divergent national requirements. Systems designed from the outset to operate as modular national clusters possess greater adaptability. This is particularly relevant given Europe’s experience with regulatory fragmentation prior to harmonization.

The long-term resilience of digital infrastructure increasingly depends on anticipating geopolitical volatility. Brexit demonstrated how rapidly regulatory boundaries can shift. Future geopolitical changes could produce similar adjustments elsewhere. Platforms prepared to adapt at the architectural level rather than through reactive restructuring may possess significant strategic advantages.
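The difference between architectural adaptation and reactive restructuring can be sketched in a few lines. The example below assumes a hypothetical design in which each country's data lives in its own isolated store and the regulatory regime that applies to a country is a separate configuration entry. The class name `ClusterRouter`, its methods, and the regime labels are all illustrative assumptions, not features confirmed for ZKTOR or any other platform.

```python
from dataclasses import dataclass, field


@dataclass
class ClusterRouter:
    """Hypothetical router: one isolated store per country, regime as config."""

    # Maps a country code to the label of the regime governing it (assumed labels).
    regime_of: dict[str, str]
    # One store per country; a plain dict stands in for a dedicated server environment.
    stores: dict[str, dict[str, bytes]] = field(default_factory=dict)

    def put(self, country: str, record_id: str, blob: bytes) -> None:
        """Store a record only in the cluster of the country it belongs to."""
        if country not in self.regime_of:
            raise KeyError(f"no regime configured for {country}")
        self.stores.setdefault(country, {})[record_id] = blob

    def countries_under(self, regime: str) -> list[str]:
        """List the countries currently governed by the given regime."""
        return sorted(c for c, r in self.regime_of.items() if r == regime)

    def reassign(self, country: str, new_regime: str) -> None:
        """Change the governing regime; stored data is not moved or rewritten."""
        if country not in self.regime_of:
            raise KeyError(f"no regime configured for {country}")
        self.regime_of[country] = new_regime


# Usage: a jurisdictional change becomes a configuration update.
router = ClusterRouter({"FI": "EU-GDPR", "DE": "EU-GDPR", "GB": "EU-GDPR"})
router.put("GB", "user-1", b"encrypted-blob")
router.reassign("GB", "UK-GDPR")
eu_countries = router.countries_under("EU-GDPR")  # ['DE', 'FI']
```

Because data was never pooled across borders in this sketch, a change in a country's legal alignment touches only the mapping, not the storage layout. A centralized architecture would instead have to identify, extract, and relocate the affected records after the fact.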

The financial independence dimension also carries implications for European investment philosophy. Venture capital has fuelled much of the technology sector’s expansion, but it has also entrenched monetization pressures. Platforms dependent on investor growth targets often prioritize rapid user acquisition and engagement optimization, sometimes at the expense of safety considerations. A model developed without venture capital and without reliance on government grants operates under different incentives. It may grow more slowly, but it retains strategic autonomy. From a European standpoint, where debates around digital strategic autonomy are intensifying, such independence resonates conceptually.

Sunil Kumar Singh’s decision to decline available European innovation grants in Finland further underscores this autonomy. Finland, known for strong public support of technology ventures, offers substantial funding mechanisms. Refusing such support suggests a deliberate choice to maintain operational sovereignty unlinked to governmental policy influence. Whether that model scales globally remains uncertain, but the signal is unmistakable.

The cross-cultural engineering synthesis also deserves deeper examination. Finland’s digital governance culture emphasizes transparency, accountability and user trust. South Asia’s digital ecosystem operates at enormous demographic scale with diverse linguistic and socioeconomic contexts. Integrating Nordic precision with South Asian complexity represents a rare design challenge. If successful, such synthesis could produce architectures capable of functioning across high-trust and low-trust environments simultaneously. This adaptability could prove valuable globally as societies vary widely in institutional trust levels.

Cyber-security professionals within Europe increasingly advocate for distributed security models. Centralized data concentration creates high-value targets for adversaries. Multi-layer encryption combined with distributed data segmentation reduces single-point failure risks. If ZKTOR’s architecture indeed fragments and encrypts data across national clusters, its attack surface may differ significantly from centralized platforms.

However, verification remains essential. Architecture claims must be tested through independent audits and real-world stress scenarios. European cyber-security institutions would likely evaluate such systems through penetration testing, key management reviews and resilience simulations before drawing definitive conclusions.

The hyper-local employment model also intersects with Europe’s digital inclusion concerns. Many European regions struggle with uneven digital opportunity distribution. Platforms that embed operational roles within local communities rather than concentrating control in metropolitan headquarters may contribute to decentralized economic participation.

The reported revenue-sharing mechanism allocating a significant portion of monetization to creators challenges dominant platform economics. Europe has debated fair compensation frameworks for digital creators, yet structural changes remain limited. Experimental models emerging elsewhere may influence future discussions.

Generational dynamics further amplify the relevance. European youth, like their South Asian counterparts, inhabit digital ecosystems deeply integrated into daily life. Concerns about mental health, algorithmic influence and online safety are intensifying. Platforms designed to minimize behavioral manipulation and tracking align with emerging public expectations. Public trust in digital services is increasingly fragile. Surveys across Europe reveal declining confidence in large technology corporations. Trust restoration may require more than policy compliance; it may require demonstrable structural restraint.

In this context, the emergence of platforms prioritizing architecture-based privacy rather than compliance-based privacy contributes to an evolving discourse. Even if such platforms remain regionally concentrated, their existence alters global expectations. Established companies may face pressure to incorporate similar architectural safeguards. The question of global influence cannot be ignored. If platforms built in South Asia achieve sustainable scale while maintaining non-surveillance models, they could challenge assumptions that profitability depends on extensive behavioral tracking. This would carry implications for investment strategies worldwide.

European regulators may find themselves observing not only American technology giants but also alternative ecosystems developing elsewhere. Regulatory frameworks often adapt to market realities. Should privacy-first architectures demonstrate viability at scale, legislative emphasis may shift from restricting data use to encouraging architecture redesign. Ultimately, the emergence of ZKTOR represents less a singular event and more a signal. It signals that the architecture debate is moving beyond Western innovation centers. It signals that privacy by design may evolve from regulatory aspiration into competitive differentiation. It signals that technological sovereignty is no longer confined to state-level infrastructure but may extend into privately developed platforms.

Europe’s experience with GDPR positioned it as a normative leader in privacy law. The next phase of digital governance may require collaboration with architectural innovators beyond its borders. Observing, evaluating and engaging with such developments will be essential. The future of digital trust will not be determined solely by legislation or by engineering. It will emerge at their intersection. Platforms that internalize both regulatory foresight and architectural discipline may shape that future. For European policymakers, cyber-security experts and digital governance scholars, the lesson is clear. The global digital landscape is no longer unipolar. Structural experimentation is underway. The question is not whether established giants will respond, but how quickly.