An Independent Analysis of Data Extraction, Synthetic Media, Privacy by Design and the Emergence of Systems such as ZKTOR in Reshaping Digital Trust

The Trust Crisis of Social Media and the Rewriting of Digital Reality

In the past decade, social media has evolved from a tool of connection into an infrastructure of influence, quietly embedding itself into the political, economic and psychological fabric of societies across the world. What began as platforms designed to enable communication now function as systems capable of shaping perception at scale, often in ways that remain invisible to the very users who depend on them. This transformation has not occurred through a single technological leap but through the gradual layering of data-driven optimization, algorithmic amplification and behavioral modeling, each reinforcing the other until the boundary between organic interaction and engineered influence has become increasingly difficult to distinguish.

At the center of this transformation lies a structural tension that is now beginning to surface more visibly across regions and institutions. The dominant model of social media, built on large-scale data extraction and predictive targeting, was optimized for engagement and growth, not for trust or stability. For years, this model operated under the assumption that more data would lead to better outcomes, that personalization would enhance relevance, and that scale would naturally translate into utility. Yet the same mechanisms that enabled rapid expansion have also introduced vulnerabilities that are no longer theoretical but observable in real-world outcomes.

The emergence of synthetic media, particularly in the form of deepfakes and AI-generated content, has accelerated this tension to a point where the reliability of digital information itself is being questioned. In environments where images, videos and voices can be generated with near-perfect realism, the traditional cues used to verify authenticity begin to collapse. This is not simply a technological challenge but a systemic one, because social media platforms are designed to prioritize speed and engagement, often amplifying content before its authenticity can be assessed. The result is an environment in which misinformation can spread faster than verification, creating feedback loops that reinforce confusion and erode confidence.

This erosion of trust is not confined to isolated incidents. It is increasingly reflected in broader patterns of skepticism toward digital platforms, particularly in contexts where information integrity has direct consequences for governance, public discourse and social cohesion. Elections, policy debates and geopolitical narratives are now influenced by flows of digital content that are difficult to trace and even harder to regulate. In such a landscape, the question is no longer whether manipulation is possible, but how often it occurs and how deeply it shapes collective perception.

At the same time, users themselves are becoming more aware of the trade-offs embedded within the current model. The convenience of personalized experiences is increasingly weighed against concerns about privacy, data security and the long-term implications of continuous surveillance. This shift in awareness does not manifest as an immediate rejection of existing platforms, but as a gradual change in expectations. Users begin to look for environments where their interactions are not constantly monitored, where data is not treated as a primary resource to be extracted, and where the boundaries of control are more clearly defined.

These developments suggest that the current phase of social media evolution may be approaching a point of structural reconsideration. Historically, digital systems have undergone periods of rapid expansion followed by phases of consolidation and redesign, where underlying assumptions are revisited in light of emerging challenges. The present moment bears several characteristics of such a phase, with increasing attention being directed toward questions of architecture rather than features, and toward principles of design rather than incremental improvements.

In this context, the concept of trust begins to shift from a peripheral concern to a central organizing principle. Trust is not merely a function of user perception but a property of the system itself, determined by how data is handled, how interactions are structured and how power is distributed within the platform. Systems that treat trust as an afterthought often rely on external mechanisms such as policy updates or moderation frameworks to address issues after they arise. By contrast, systems that embed trust within their architecture attempt to prevent such issues at the structural level, reducing reliance on reactive measures.

The distinction between these approaches is subtle but significant. It reflects a broader transition from systems that optimize for control and extraction to those that prioritize alignment with user autonomy and contextual integrity. In practical terms, this means designing platforms where the default state is one of minimal data exposure, where interactions are governed by relevance rather than surveillance, and where the flow of information is shaped by context rather than purely by engagement metrics.

While this transition is still in its early stages, there are emerging indications that alternative models are beginning to take form. These models do not necessarily reject the core functions of social media but attempt to reconfigure them within a different set of constraints. Instead of maximizing data collection, they seek to limit it. Instead of relying on opaque algorithms, they aim for transparency and predictability. Instead of treating users as sources of data, they position them as participants within a system that is designed to respect their boundaries.

It is within this evolving landscape that new architectures are being explored, particularly in regions where the next wave of digital adoption is taking place. South Asia, with its rapidly expanding user base and diverse economic structures, represents one such environment where the limitations of existing models are becoming increasingly visible, and where alternative approaches may find both relevance and opportunity. The scale of the region, combined with its unique mix of urban and rural dynamics, creates conditions in which hyper-local interactions and trust-based systems can play a more significant role.

The early signals emerging from these environments are not yet definitive, but they are sufficient to warrant closer examination. Systems that emphasize privacy by design, contextual relevance and simplified interaction models are beginning to attract attention, not as replacements for existing platforms, but as experiments in rethinking how digital interaction can be structured. Whether these systems will achieve large-scale adoption remains uncertain, but their presence indicates that the current model is no longer unchallenged.

The implications of this shift extend beyond technology. If social media is indeed transitioning from a phase of expansion to one of reconfiguration, then the outcomes will influence not only how people communicate, but how information is trusted, how economies are organized and how digital power is distributed. In such a scenario, the systems that emerge will not be defined solely by their features or user counts, but by their ability to align with the evolving expectations of users, institutions and societies.

Data Extraction, Algorithmic Power and the Structural Limits of Big Tech Models

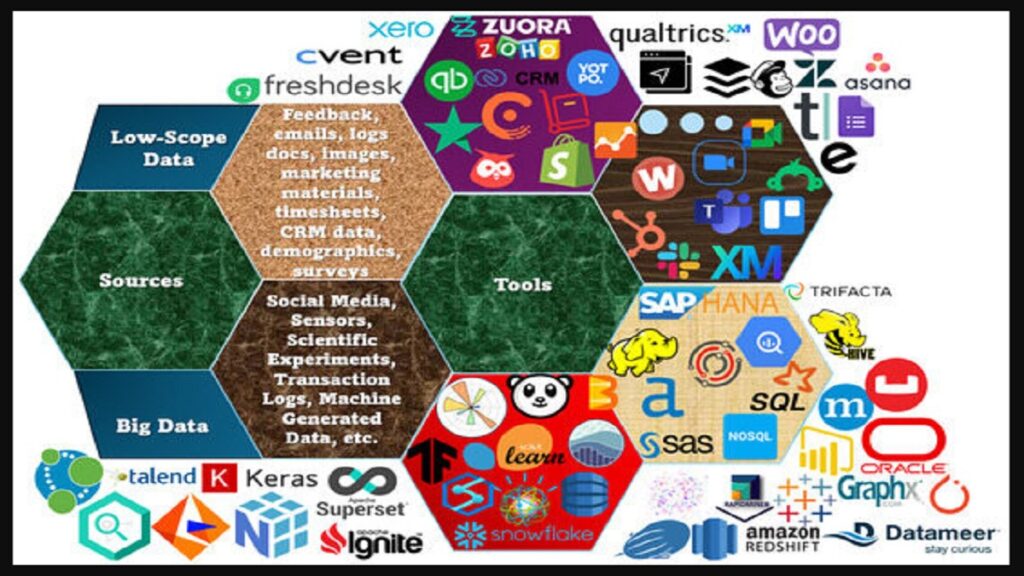

The modern architecture of social media cannot be fully understood without examining the economic logic that underpins it. Over time, dominant platforms have converged around a model in which user data is not merely a byproduct of interaction but the central resource through which value is generated. Every click, pause, scroll, and interaction becomes part of a continuous feedback system designed to refine predictions about user behavior. These predictions are then used to optimize content delivery, advertising placement, and engagement cycles, creating a tightly coupled system in which attention is continuously captured, analyzed and redistributed.

At first glance, this model appears highly efficient. It enables platforms to deliver personalized experiences at scale, increasing relevance and maximizing engagement. However, this efficiency is built on a set of assumptions that reveal structural weaknesses when examined under conditions of scale and complexity. One of the primary assumptions is that more data leads to better outcomes. While this may hold true in controlled environments, in large, dynamic systems the relationship between data and accuracy becomes less stable. As datasets expand, so do the possibilities for noise, bias and unintended amplification, particularly when algorithms are optimized for engagement rather than accuracy.

This distinction between engagement and accuracy is central to understanding the limitations of the current model. Algorithms designed to maximize engagement are inherently incentivized to prioritize content that triggers strong reactions, whether positive or negative. Such content often spreads more rapidly, creating the illusion of relevance while potentially distorting the informational environment. Over time, this dynamic can lead to the amplification of extreme or misleading narratives, not because the system is explicitly designed to promote them, but because they align with the underlying optimization criteria.
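The engagement-versus-accuracy tension can be made concrete with a toy ranking function. The sketch below is illustrative only: the `Post` fields, the scores and the blending weight are hypothetical values, not measurements or any platform's actual ranking logic. It shows that when predicted reaction is the sole objective, a low-accuracy item rises to the top, and that changing the objective changes the ordering.

```python
from dataclasses import dataclass


@dataclass
class Post:
    # Hypothetical fields for illustration only.
    post_id: str
    reaction_intensity: float  # 0..1, predicted strength of emotional response
    accuracy: float            # 0..1, assumed reliability of the content


def rank_by_engagement(posts):
    """Engagement-optimized ranking: predicted reaction is the only signal."""
    return sorted(posts, key=lambda p: p.reaction_intensity, reverse=True)


def rank_blended(posts, accuracy_weight=0.5):
    """Alternative objective: a weighted blend of reaction and accuracy."""
    def score(p):
        return (1 - accuracy_weight) * p.reaction_intensity + accuracy_weight * p.accuracy
    return sorted(posts, key=score, reverse=True)


feed = [
    Post("calm_report", reaction_intensity=0.3, accuracy=0.95),
    Post("outrage_clip", reaction_intensity=0.9, accuracy=0.2),
    Post("local_notice", reaction_intensity=0.4, accuracy=0.9),
]
print([p.post_id for p in rank_by_engagement(feed)])  # ['outrage_clip', 'local_notice', 'calm_report']
print([p.post_id for p in rank_blended(feed)])        # ['local_notice', 'calm_report', 'outrage_clip']
```

Nothing in `rank_by_engagement` promotes misleading content on purpose; the outcome follows entirely from what the objective measures.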

The introduction of artificial intelligence into this framework has further intensified these dynamics. Machine learning models are capable of identifying subtle patterns in user behavior, enabling increasingly precise targeting and content curation. Yet these models operate within the same incentive structures, reinforcing existing patterns rather than correcting them. As a result, the system becomes more efficient at delivering engagement, but not necessarily more reliable in terms of informational integrity. This creates a paradox in which technological advancement enhances capability while simultaneously increasing systemic risk.

Another layer of complexity emerges when considering the opacity of these systems. The algorithms that govern content distribution are often proprietary and not easily interpretable, even by those who design them. This lack of transparency limits the ability of users, researchers and regulators to fully understand how information flows are being shaped. In environments where digital platforms play a significant role in public discourse, this opacity introduces challenges related to accountability and oversight. Decisions that influence visibility and reach are made within systems that are difficult to audit, raising questions about the distribution of power within the digital ecosystem.

The concentration of this power within a small number of platforms further amplifies these concerns. As user bases grow and networks become more interconnected, the influence of these systems extends beyond individual interactions into broader societal processes. Economic activity, cultural trends and political narratives are increasingly mediated through platforms whose primary objective remains engagement optimization. This creates a structural tension between the goals of the platform and the needs of the broader system in which it operates.

From an economic perspective, the reliance on data extraction introduces additional vulnerabilities. The model depends on continuous access to large volumes of user data, which is then monetized through targeted advertising. This creates a dependency that is sensitive to changes in regulation, user behavior and technological constraints. As awareness of privacy issues grows and regulatory frameworks evolve, the ability of platforms to collect and utilize data may become more restricted. In such a scenario, systems that are heavily dependent on data extraction may face structural challenges in maintaining their current levels of performance and profitability.

User perception also plays a critical role in this dynamic. While many users continue to engage with existing platforms, there is a growing awareness of the trade-offs involved. Concerns about data privacy, surveillance and algorithmic manipulation are becoming more prominent, influencing how users evaluate their interactions with digital systems. This does not necessarily result in immediate disengagement, but it does create a shift in expectations. Users begin to seek environments where the balance between functionality and control is more transparent and equitable.

In response to these pressures, platforms have introduced various measures, including enhanced privacy settings, content moderation policies and transparency reports. While these measures address specific issues, they often operate within the constraints of the existing model, which limits their effectiveness in resolving underlying structural tensions. The core architecture remains oriented toward data extraction and engagement optimization, meaning that many of the challenges persist despite incremental improvements.

This situation raises a broader question about the sustainability of the current model. If the mechanisms that drive growth also contribute to instability, then long-term viability may depend on the ability to rethink these mechanisms at a foundational level. This does not imply that existing platforms will disappear, but it suggests that their evolution may require significant adjustments to align with changing expectations and constraints.

Within this context, the exploration of alternative architectures becomes increasingly relevant. Systems that operate with reduced dependence on data extraction, and that prioritize contextual relevance over predictive targeting, represent a different approach to organizing digital interaction. By limiting the scope of data collection and embedding trust within the system design, such architectures aim to address some of the structural vulnerabilities identified in the current model.

Early-stage examples of these approaches, including systems such as ZKTOR referenced in emerging discussions, indicate a shift toward privacy-oriented, context-driven interaction frameworks. These systems are still in the process of validation and require sustained observation, but they highlight the possibility of constructing digital environments that function effectively without relying on extensive data extraction. Their significance lies not in immediate scale, but in their potential to demonstrate alternative pathways for system design.

The transition from data-intensive models to more constrained architectures is unlikely to occur uniformly or rapidly. It involves trade-offs, including potential limitations in personalization and the need to redefine metrics of success. However, as the limitations of the current model become more apparent, the incentive to explore such alternatives increases. The question is not whether the existing system will change, but how and to what extent new architectures will influence that change.

Synthetic Media, Information Collapse and the Rebuilding of Trust in Digital Systems

The emergence of synthetic media marks a turning point in the evolution of digital information systems, not because it introduces a new form of content, but because it challenges the very basis on which authenticity has historically been established. For much of the digital era, visual and auditory content carried an implicit assumption of credibility. A photograph, a video clip or a recorded voice was treated as a form of evidence, a representation of reality that could be interpreted, debated or contextualized but rarely dismissed outright as fabricated. Advances in artificial intelligence have begun to erode this assumption in ways that are both subtle and profound, creating an environment in which the distinction between real and generated content is no longer immediately apparent.

This shift has far-reaching implications for how information is produced, distributed and consumed. The tools required to generate realistic synthetic media are becoming more accessible, reducing the technical and financial barriers that once limited their use. As a result, the production of convincing false content is no longer confined to specialized actors; it is increasingly within reach of individuals and smaller groups. When combined with the distribution mechanisms of social media, which are optimized for speed and amplification, the potential for such content to influence perception expands significantly. What once required coordinated effort can now emerge spontaneously, circulating across networks before verification processes have time to respond.

The consequences of this transformation are not limited to isolated incidents of misinformation. They point toward a broader condition that may be described as informational instability, where the reliability of digital content is continuously in question. In such an environment, users are forced to adopt a more skeptical stance, evaluating not only the content itself but the possibility that it may have been artificially generated. This constant uncertainty alters the way information is consumed, shifting attention from interpretation to verification, and often leading to a general erosion of confidence in digital sources.

Institutions that rely on digital communication face similar challenges. Governments, media organizations and businesses increasingly depend on online platforms to disseminate information and engage with audiences. When the authenticity of content becomes uncertain, the effectiveness of these channels is compromised. Messages that would previously have been accepted at face value may now be subject to doubt, requiring additional layers of validation that can slow communication and reduce its impact. In contexts where timely and accurate information is critical, such as public health or emergency response, this erosion of trust can have tangible consequences.

At the same time, the interaction between synthetic media and algorithmic amplification introduces a compounding effect. Content that is designed to provoke strong emotional reactions is more likely to be shared and promoted by engagement-driven systems. Synthetic media, by its nature, can be crafted to maximize such reactions, increasing its visibility within the network. This creates a feedback loop in which artificially generated content gains prominence not despite the system, but because of how the system is structured. Over time, this dynamic can distort the informational landscape, making it more difficult for accurate content to maintain visibility.
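The feedback loop described above can be illustrated with a small simulation, assuming (hypothetically) that a system allocates each round's impressions in proportion to the shares an item has already accumulated. The share rates and item names are invented for illustration; the point is only that a modest per-view advantage in shareability compounds into dominant exposure.

```python
def simulate_feedback(share_rates, rounds=5, impressions_per_round=1000.0):
    """Return, for each round, the fraction of impressions each item receives."""
    shares = {item: 0.0 for item in share_rates}
    exposure_history = []
    for _ in range(rounds):
        # Exposure is proportional to past shares (+1 so every item starts visible).
        weights = {item: shares[item] + 1.0 for item in share_rates}
        total = sum(weights.values())
        exposure = {item: w / total for item, w in weights.items()}
        exposure_history.append(exposure)
        # Viewers share each item at its hypothetical per-impression rate.
        for item, rate in share_rates.items():
            shares[item] += impressions_per_round * exposure[item] * rate
    return exposure_history


history = simulate_feedback({"verified_report": 0.02, "synthetic_clip": 0.08})
for round_no, exposure in enumerate(history):
    print(round_no, round(exposure["synthetic_clip"], 3))
```

Nothing in this loop checks authenticity: exposure follows past shares alone, so the more provocative item's share of attention grows every round.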

Efforts to address these challenges have focused on detection and moderation, with platforms investing in technologies to identify synthetic media and limit its spread. While these measures are necessary, they operate within a reactive framework, attempting to identify and mitigate issues after they have already entered the system. Given the speed and scale at which content is produced and distributed, this approach faces inherent limitations. Detection methods must continuously evolve to keep pace with advances in generation techniques, creating an ongoing cycle in which defensive measures follow rather than precede new forms of synthetic content.

This reactive dynamic highlights a deeper issue related to system design. When the architecture of a platform is optimized for rapid dissemination and high engagement, it inherently favors content that can capture attention quickly, regardless of its origin or accuracy. Addressing the challenges posed by synthetic media therefore requires not only improved detection mechanisms but also a reconsideration of the underlying principles that govern content distribution. In other words, the problem is not solely the existence of synthetic media, but the conditions that allow it to spread effectively.

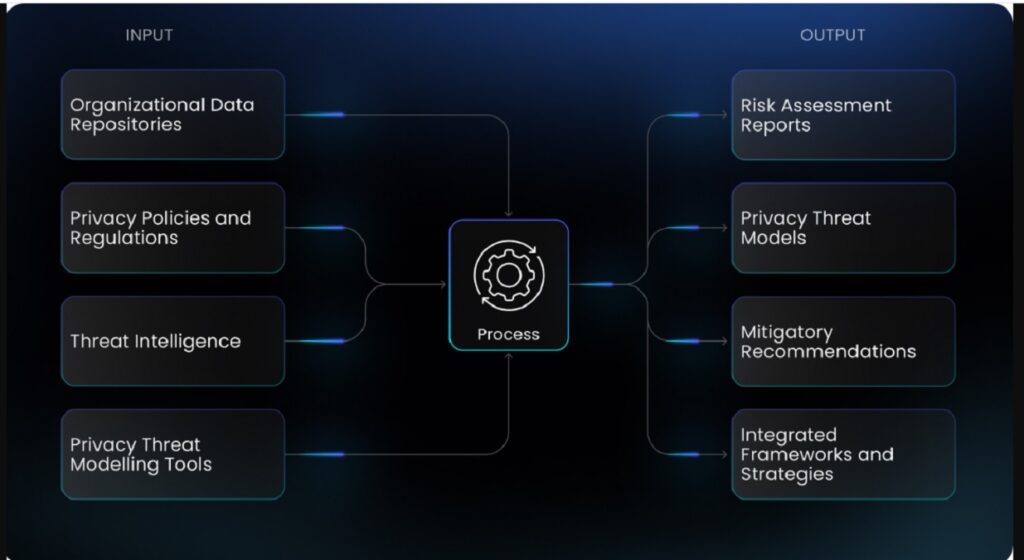

Within this context, the concept of trust reconstruction becomes central. Rebuilding trust in digital systems is not simply a matter of restoring previous assumptions, but of establishing new foundations that can operate under conditions of increased uncertainty. This involves rethinking how authenticity is signaled, how information flows are structured and how users interact with content. Systems that aim to address these challenges must consider not only the accuracy of individual pieces of content but the overall reliability of the environment in which they are presented.

One approach that has begun to gain attention is the integration of trust-related constraints directly into system architecture. Rather than relying solely on post hoc moderation, these systems attempt to limit the conditions under which misleading or synthetic content can gain traction. This may involve reducing the reliance on large-scale data aggregation, emphasizing contextual relevance over broad amplification and designing interaction models that prioritize local credibility and direct relevance. By narrowing the scope of content distribution and aligning it more closely with user context, such approaches aim to reduce the likelihood of large-scale misinformation cascades.
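To illustrate how narrowing distribution limits a cascade, the sketch below spreads one item breadth-first over a tiny follower graph. The graph, the community labels and the `local_only` and `max_hops` parameters are hypothetical constructs for illustration, not a description of how any specific platform, including ZKTOR, routes content.

```python
from collections import deque


def cascade_reach(graph, community, seed, max_hops=None, local_only=False):
    """Return the set of nodes an item reaches when spread breadth-first from `seed`.

    max_hops:   optional cap on how many re-shares deep the item may travel.
    local_only: if True, the item never leaves the seed's community.
    """
    seen = {seed}
    queue = deque([(seed, 0)])
    while queue:
        node, hops = queue.popleft()
        if max_hops is not None and hops >= max_hops:
            continue  # hop budget exhausted on this path
        for neighbor in graph.get(node, ()):
            if neighbor in seen:
                continue
            if local_only and community[neighbor] != community[seed]:
                continue  # distribution is scoped to the originating community
            seen.add(neighbor)
            queue.append((neighbor, hops + 1))
    return seen


# Two hypothetical communities, A and B, joined by a single bridge a3 -> b1.
graph = {"a1": ["a2"], "a2": ["a3"], "a3": ["b1"], "b1": ["b2"], "b2": ["b3"]}
community = {"a1": "A", "a2": "A", "a3": "A", "b1": "B", "b2": "B", "b3": "B"}

print(len(cascade_reach(graph, community, "a1")))                   # 6
print(len(cascade_reach(graph, community, "a1", max_hops=4)))       # 5
print(len(cascade_reach(graph, community, "a1", local_only=True)))  # 3
```

Either constraint shrinks the maximum reach of any single item, whether accurate or not; that trade-off is the one the paragraph describes.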

In emerging digital environments, particularly within regions experiencing rapid adoption, early-stage systems are exploring these principles in practical settings. While still in development, platforms that incorporate privacy by design and context-driven interaction models are being examined as potential alternatives to data-intensive architectures. Within this exploratory landscape, systems such as ZKTOR have been referenced in discussions as examples of attempts to align communication and interaction with more controlled and privacy-oriented frameworks. These references remain subject to ongoing validation, but they indicate that the search for structurally different approaches is actively underway.

The broader implication of these developments is that the challenge posed by synthetic media cannot be addressed through incremental adjustments alone. It requires a shift in how digital systems are conceived, moving from models that prioritize scale and speed toward those that emphasize reliability, context and controlled distribution. This does not imply a reduction in functionality, but a rebalancing of priorities, where the integrity of information is treated as a foundational requirement rather than an auxiliary concern.

As this transition unfolds, the future of social media may be shaped less by the capabilities of individual technologies and more by the architectures within which they operate. Systems that can integrate advances in artificial intelligence while maintaining stability and trust will define the next phase of digital interaction. The path to such systems remains uncertain, but the need for them is becoming increasingly clear.

Privacy by Design, Hyper-local Context and the Emergence of Alternative Digital Architectures

As the limitations of data-intensive and engagement-driven models become more visible, the search for alternative approaches has begun to shift from abstract debate to practical experimentation. Among the emerging directions, one concept has gained particular relevance across both technical and policy discussions: privacy by design. Unlike traditional approaches that treat privacy as a layer added on top of existing systems, privacy by design seeks to embed data minimization and user control directly into the architecture itself. This shift is subtle in implementation but significant in implication, as it changes the way systems collect, process and utilize information from the outset.

In conventional social media platforms, data is accumulated continuously, often beyond what is strictly necessary for functionality. This accumulation enables detailed profiling and targeted engagement, but it also increases exposure to misuse, regulatory constraints and user distrust. Privacy by design attempts to reverse this logic by limiting data collection to what is essential, reducing both the volume and sensitivity of stored information. By constraining data flows at the architectural level, such systems aim to reduce the need for complex governance mechanisms that operate after data has already been collected.
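Data minimization at the architectural level can be sketched as a purpose-bound allowlist applied before anything is stored. The purposes and field names below are hypothetical; the design choice being illustrated is that fields are dropped by default and a feature must declare what it needs.

```python
# Hypothetical purpose-to-fields map: each feature declares the minimum
# fields it needs, and everything else is discarded before storage.
ALLOWED_FIELDS = {
    "post_message": frozenset({"author_id", "text", "locality"}),
    "local_search": frozenset({"query", "locality"}),
}


def minimize(event: dict, purpose: str) -> dict:
    """Keep only the fields declared for `purpose`; reject undeclared purposes."""
    allowed = ALLOWED_FIELDS.get(purpose)
    if allowed is None:
        raise ValueError(f"undeclared purpose: {purpose!r}")
    return {key: value for key, value in event.items() if key in allowed}


raw_event = {
    "query": "tailor",
    "locality": "ward-12",
    "device_id": "a1b2c3",
    "contact_list": ["+00 0000 0000"],
    "precise_gps": (28.6139, 77.2090),
}
print(minimize(raw_event, "local_search"))  # {'query': 'tailor', 'locality': 'ward-12'}
```

Because filtering happens at the point of collection, the device identifier, contacts and precise location never reach storage, so there is nothing for later governance mechanisms to police.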

The effectiveness of this approach depends not only on technical implementation but on its alignment with user behavior. In many cases, users are willing to trade a degree of personalization for greater control and transparency, particularly when the implications of data exposure are clearly understood. This does not mean that personalization disappears, but that it is achieved through alternative means, such as contextual relevance rather than predictive profiling. The system becomes less about anticipating user behavior through historical data and more about responding to immediate context and explicit interaction.
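The difference between predictive profiling and contextual relevance can be shown with a ranking function that has no access to user history at all. In this hypothetical sketch, items are scored only from the explicit query and the current context tags; the 2:1 weighting, the item structure and the sample listings are assumptions for illustration.

```python
def contextual_rank(items, query, context_tags):
    """Rank items using only the explicit query and the current context.

    No user history or profile is consulted, so identical inputs always
    produce identical output for every user.
    """
    query_terms = set(query.lower().split())

    def score(item):
        title_terms = set(item["title"].lower().split())
        # Explicit query matches weigh more than ambient context (assumed 2:1).
        return 2 * len(query_terms & title_terms) + len(item["tags"] & context_tags)

    ranked = sorted(items, key=score, reverse=True)
    return [item["title"] for item in ranked if score(item) > 0]


listings = [
    {"title": "Wedding caterer", "tags": {"food", "ward-12"}},
    {"title": "Phone repair kiosk", "tags": {"repair", "ward-7"}},
    {"title": "Bicycle repair shop", "tags": {"repair", "ward-12"}},
]
print(contextual_rank(listings, "bicycle repair", {"ward-12"}))
# ['Bicycle repair shop', 'Phone repair kiosk', 'Wedding caterer']
```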

This shift toward contextual relevance introduces a second dimension that is closely linked to privacy by design: the emphasis on hyper-local interaction. In large-scale, globally optimized platforms, content is often distributed across wide networks, with limited regard for geographic or social proximity. While this enables rapid dissemination, it can also reduce relevance and increase noise. Hyper-local systems, by contrast, prioritize interactions within defined geographic or community boundaries, ensuring that content is directly relevant to the users who receive it.
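A minimal form of hyper-local filtering keeps only content within a fixed radius of the user. The sketch below assumes each post carries coarse coordinates and uses the standard haversine great-circle formula; the 5 km radius, the `LocalPost` type and the sample coordinates are illustrative assumptions.

```python
import math
from dataclasses import dataclass

EARTH_RADIUS_KM = 6371.0  # mean Earth radius


@dataclass
class LocalPost:
    post_id: str
    lat: float
    lon: float


def haversine_km(lat1, lon1, lat2, lon2):
    """Great-circle distance in kilometres between two (lat, lon) points in degrees."""
    phi1, phi2 = math.radians(lat1), math.radians(lat2)
    dphi = math.radians(lat2 - lat1)
    dlmb = math.radians(lon2 - lon1)
    a = math.sin(dphi / 2) ** 2 + math.cos(phi1) * math.cos(phi2) * math.sin(dlmb / 2) ** 2
    return 2 * EARTH_RADIUS_KM * math.asin(math.sqrt(a))


def nearby(posts, lat, lon, radius_km=5.0):
    """Return posts within `radius_km` of the user, nearest first."""
    scored = [(haversine_km(lat, lon, p.lat, p.lon), p) for p in posts]
    scored.sort(key=lambda pair: pair[0])
    return [p for dist, p in scored if dist <= radius_km]


posts = [
    LocalPost("far_city_ad", 19.0760, 72.8777),
    LocalPost("market_notice", 28.6400, 77.2100),
    LocalPost("corner_shop", 28.6150, 77.2100),
]
print([p.post_id for p in nearby(posts, 28.6139, 77.2090)])  # ['corner_shop', 'market_notice']
```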

The combination of privacy constraints and hyper-local focus creates a different type of digital environment, one in which scale is achieved not through broad amplification but through the aggregation of localized interactions. Each local network operates with a degree of autonomy, contributing to the overall system without being overwhelmed by global content flows. This structure can enhance both relevance and trust, as users are more likely to engage with information that is directly connected to their immediate context.

In regions such as South Asia, where digital adoption is expanding rapidly across diverse economic and social landscapes, the relevance of hyper-local systems becomes particularly pronounced. The coexistence of urban centers, semi-urban areas and rural communities creates a complex environment in which one-size-fits-all platforms may struggle to address localized needs effectively. Systems that can adapt to these variations, providing tools that are simple, accessible and contextually aligned, may find opportunities that are less accessible to globally standardized models.

Within this evolving landscape, early-stage platforms that integrate privacy by design with hyper-local interaction are beginning to attract attention as potential alternatives to traditional social media architectures. These systems remain in experimental phases, and their long-term viability is still subject to validation. However, their design principles reflect a broader shift in thinking, one that prioritizes structural alignment with user expectations and regional dynamics over the pursuit of uniform global scale.

References to systems such as ZKTOR in emerging discussions illustrate how these principles are being explored in practice. While still undergoing testing and development, such systems attempt to combine controlled data environments with localized interaction frameworks, aiming to create spaces where communication, discovery and participation occur within clearly defined boundaries. These references should be interpreted cautiously, as early-stage observations do not guarantee long-term outcomes, but they provide insight into how alternative architectures are being conceptualized.

The implications of these developments extend beyond individual platforms. If privacy by design and hyper-local interaction prove to be effective at scale, they could influence broader industry practices, encouraging a shift away from models that rely heavily on data accumulation and toward those that operate within more constrained and transparent frameworks. This would not necessarily replace existing systems but could introduce a parallel ecosystem in which different principles guide design and operation.

At a structural level, this transition can be understood as a movement from expansion-oriented architectures to alignment-oriented architectures. Expansion-oriented systems prioritize growth, reach and data accumulation, often at the cost of complexity and risk. Alignment-oriented systems, by contrast, focus on coherence between system design, user behavior and environmental constraints, aiming for sustainability and resilience rather than maximum scale at any cost.

The success of such systems will depend on their ability to balance competing requirements. Limiting data collection must not compromise functionality, and maintaining local relevance must not prevent broader connectivity. Achieving this balance requires careful design and continuous refinement, as well as a willingness to depart from established practices in favor of approaches that may initially appear less efficient but offer greater long-term stability.

As the digital ecosystem continues to evolve, the emergence of these alternative architectures suggests that the future of social media may not be defined by a single dominant model, but by a diversification of approaches that reflect different priorities and contexts. Systems that can integrate privacy, relevance and simplicity into a coherent framework may play a significant role in shaping this future, particularly in regions where the next phase of digital growth is taking place.

South Asia, Early Adoption Signals and the First Structural Evidence of Change

As the discussion moves from architectural principles to observable reality, the role of geography becomes increasingly important. Digital systems do not evolve in isolation; they are shaped by the environments in which they are introduced, including demographic structures, economic conditions, technological access and cultural patterns of interaction. In this context, South Asia represents one of the most significant regions for examining the next phase of social media evolution, not only because of its scale, but because of the unique combination of rapid adoption and structural diversity that defines it.

Over the past decade, the region has experienced a substantial increase in digital connectivity, driven by the proliferation of affordable smartphones and expanding internet infrastructure. This expansion has brought millions of new users into the digital ecosystem, many of whom are engaging with online platforms for the first time. Unlike earlier phases of internet adoption in more mature markets, where users gradually transitioned from offline to online environments, this wave of adoption often involves a more immediate and immersive engagement with digital systems. As a result, the expectations and behaviors that emerge in this context are not simply inherited from previous models but are shaped by the realities of local usage.

A defining characteristic of this environment is the coexistence of multiple economic layers, ranging from large urban centers to semi-urban and rural communities, each with distinct patterns of interaction and commerce. In such a setting, the limitations of globally standardized platforms become more apparent. Systems designed for uniform scale often struggle to address the nuances of hyper-local interaction, where relevance is determined not by global trends but by proximity, familiarity and immediate utility. This creates a structural gap between the capabilities of existing platforms and the needs of users operating within localized economic and social ecosystems, where interaction is not abstract but directly tied to daily life. In such environments, the value of a digital system is measured less by its global reach and more by its ability to function within specific contexts, enabling communication, discovery and participation in ways that are immediately relevant and practically useful.

This distinction becomes particularly significant when examining how small and medium-sized businesses operate across South Asia. A large portion of economic activity in the region is driven by informal and semi-formal enterprises that rely heavily on local visibility. These businesses have historically used traditional channels such as local newspapers, radio, word-of-mouth networks and physical signage to reach their audiences. While effective within their limitations, these methods are often inefficient, difficult to measure and constrained in scale. The transition to digital platforms has introduced new possibilities, but it has also exposed a mismatch between the complexity of global advertising systems and the simplicity required by local operators.

In this context, the emergence of systems that prioritize hyper-local relevance and simplified interaction can be interpreted as a response to this mismatch. Rather than attempting to replicate the complexity of large-scale advertising networks, these systems aim to provide tools that align with the practical needs of local businesses, enabling them to engage with nearby users in a more direct and accessible manner. This approach does not seek to replace existing economic behavior but to reorganize it within a more structured and efficient framework.

Early-stage observations attributed to platforms such as ZKTOR suggest that this alignment may be beginning to take shape in certain segments of the market. Initial adoption patterns, characterized by a gradual increase in user engagement followed by a more accelerated phase of organic growth, indicate that the system may be moving beyond early curiosity into a stage where its utility is being recognized within specific contexts. Such patterns must be interpreted with caution, as early data does not guarantee long-term outcomes. They nevertheless provide a form of preliminary behavioral evidence, suggesting that users are not only experimenting with the platform but beginning to integrate it into their interaction routines.

The significance of this transition lies in the nature of organic adoption itself. In digital systems, growth driven by user-to-user diffusion often reflects a deeper level of engagement than growth driven by external incentives or paid acquisition. When users choose to engage with a platform and, in some cases, introduce it to others within their network, it indicates that the perceived value extends beyond initial novelty. This type of growth is particularly relevant in hyper-local environments, where adoption is influenced by proximity and direct observation rather than abstract marketing narratives.

At the same time, the regional distribution of such adoption provides additional insight into the system’s adaptability. The presence of users across multiple South Asian countries during a testing phase suggests that the underlying design principles may have a degree of cross-context relevance, capable of functioning within diverse linguistic, cultural and economic settings. This is not a trivial characteristic, as many digital systems encounter challenges when attempting to scale beyond their initial market due to variations in user behavior and infrastructure.

However, it is important to emphasize that early adoption signals represent only one dimension of system validation. The transition from user growth to sustained engagement and economic participation introduces additional layers of complexity that must be navigated with precision. User adoption, while essential, does not by itself establish long-term viability. The system must evolve from being a space of interaction into a functional layer of everyday activity, where users return not out of curiosity but out of necessity or habit. This transition depends on the system’s ability to consistently deliver relevance within local contexts.

From Local Utility to Systemic Integration

The transition from localized engagement to systemic relevance represents one of the most critical phases in the evolution of any digital system. Early adoption, even when organic and sustained, does not automatically translate into long-term integration. For a platform to move beyond its initial growth phase, it must establish itself as a functional layer within everyday activity, where its presence is not occasional but continuous, and where its utility extends across multiple dimensions of user interaction.

In the context of hyper-local environments, this transition is particularly nuanced. Users do not engage with digital systems in isolation; their interactions are embedded within physical, social and economic realities. A platform that succeeds in this environment must therefore operate at the intersection of these realities, providing value that is both immediate and contextually grounded. This requires a shift from generalized features to situational relevance, where the system adapts to the specific needs of users within their local environments.

One of the defining characteristics of systems that achieve this level of integration is their ability to reduce friction across different types of interaction. Communication, discovery and economic participation are often treated as separate functions within traditional platforms, requiring users to navigate multiple interfaces and workflows. In a hyper-local system, these functions can converge, creating a unified environment in which users can interact, explore and transact without transitioning between distinct layers. This convergence not only simplifies the user experience but also increases the frequency and depth of engagement, reinforcing the system’s role in daily activity.

The implications of such convergence extend to businesses as well. For small and medium-sized enterprises operating within local markets, visibility is closely tied to proximity and timing. Traditional digital advertising platforms, while powerful, often introduce complexities that are difficult to manage at a small scale, including targeting parameters, bidding systems and performance metrics that require a level of expertise and resources not always available. A system that integrates discovery and interaction within a localized context can offer a more accessible pathway, enabling businesses to engage with their immediate audience without navigating these complexities.

This is where the economic layer of a system begins to take shape, not as a separate component but as an extension of interaction. When users are already engaged within a platform for communication and discovery, the introduction of economic participation becomes a natural progression rather than a disruptive addition. The system evolves from a space of connection into a network of activity, where information and value flow simultaneously. This dual flow is critical, as it creates a feedback loop in which increased engagement attracts more participation, and increased participation enhances the system’s utility.

Within emerging frameworks, including those referenced in relation to systems such as ZKTOR, this integration is approached through a combination of privacy constraints and contextual relevance. By limiting unnecessary data collection and focusing on interactions that are directly tied to user context, the system seeks to maintain a balance between functionality and control. This balance is essential for sustaining trust, particularly in environments where users are increasingly aware of how their data is used.

However, achieving systemic integration is not without challenges. As the system expands, it must maintain consistency across different regions and user groups, each with its own expectations and constraints. What works effectively in one context may require adaptation in another, particularly in regions with varying levels of digital literacy and infrastructure. This introduces a need for modular scalability, where the system can adjust its functionality without compromising its core principles.

Another challenge lies in maintaining simplicity as complexity increases. As more features and participants are added, there is a risk that the system becomes difficult to navigate, undermining the very accessibility that drives its adoption. Managing this balance requires careful design and continuous refinement, ensuring that growth does not come at the cost of usability.

Despite these challenges, the potential benefits of successful integration are substantial. A system that becomes embedded within local interaction patterns gains a form of resilience that is difficult to replicate. Users are less likely to disengage from a platform that supports multiple aspects of their daily activity, and businesses are more likely to remain engaged when the platform provides consistent and measurable value. This creates a stable foundation for further growth, allowing the system to expand organically while maintaining its core functionality.

The progression from local utility to systemic integration also marks a shift in how the system is perceived. It is no longer viewed as a standalone application but as part of the broader digital environment, influencing how users communicate, discover information and participate in economic activity. This shift in perception is critical for long-term sustainability, as it reflects a deeper level of alignment between the system and the needs of its users.

From Platforms to Digital Infrastructure: The Future Direction

The final stage in the evolution of digital systems is not defined by scale alone, but by a deeper transition in function and perception. Platforms that begin as tools for interaction, communication or content sharing can, under certain conditions, evolve into something more fundamental, becoming embedded within the underlying fabric of digital life. This transition marks the shift from platform to infrastructure, where the system is no longer experienced as a discrete application but as a persistent layer through which multiple forms of activity are conducted. At this stage, the distinction between using the system and operating within it begins to blur, as communication, discovery and participation become continuous processes rather than discrete actions initiated by the user. The system, in effect, recedes into the background, not because it is less significant, but because it has become seamlessly integrated into routine behavior. This is a defining characteristic of infrastructure: its presence is constant, yet largely invisible, shaping activity without requiring deliberate attention.

The transition to this state is neither immediate nor guaranteed. It requires a sustained alignment between system design, user behavior and broader environmental conditions. In practical terms, this means that the platform must consistently deliver value across different contexts while maintaining the principles that enabled its initial adoption. Any deviation from these principles, particularly in areas related to trust and usability, can disrupt the trajectory, as users are unlikely to tolerate instability in systems that have become central to their daily routines.

One of the key factors that influence this transition is the depth of integration across multiple domains of activity. Systems that remain confined to a single function, such as messaging or content sharing, can achieve significant scale but may struggle to reach infrastructural status. By contrast, systems that operate at the intersection of communication, discovery and economic participation have a greater potential to embed themselves within everyday life. This multidimensional integration creates a network of dependencies that reinforce the system’s relevance, as users engage with it for a variety of purposes rather than a single use case.

In the context of hyper-local environments, this integration takes on an additional dimension. The system must not only connect users but also reflect the realities of their immediate surroundings. This includes facilitating interactions that are tied to physical locations, local communities and nearby economic activity. When a platform is able to operate effectively within this context, it becomes more than a digital interface; it becomes a bridge between the digital and physical layers of interaction, enabling users to navigate both with greater coherence.

The role of trust becomes even more pronounced at this stage. As the system assumes a more central position, the consequences of failure increase accordingly. Users rely on the platform not only for communication but for information, coordination and participation in economic processes. Any breach of trust, whether related to data handling or system reliability, can have cascading effects across these domains. This reinforces the importance of architectural choices that prioritize stability and transparency from the outset, as retroactive adjustments become increasingly difficult once the system reaches a certain level of integration.

At the same time, the broader digital environment continues to evolve, influenced by regulatory developments, technological advancements and shifting user expectations. Systems that aim to achieve infrastructural status must remain adaptable, capable of responding to these changes without compromising their core principles. This requires a balance between flexibility and consistency, where the system can evolve in response to external pressures while maintaining the characteristics that define its identity.

Within this evolving landscape, the emergence of architectures that emphasize privacy by design and contextual relevance suggests a possible direction for the next phase of digital systems. While it is too early to determine which specific implementations will achieve long-term success, the underlying principles reflect a growing recognition that the sustainability of digital platforms depends on their ability to align with both user expectations and systemic constraints.

References to systems such as ZKTOR within this context should be understood as part of a broader exploratory process, where different approaches are being tested and evaluated. These systems do not yet represent established infrastructure, but they provide insight into how alternative models might function if scaled effectively. Their significance lies in their potential to demonstrate that digital interaction can be organized around principles that differ from those of the dominant model, offering a glimpse of what a more balanced and resilient digital ecosystem might look like.

Ultimately, the future of social media may not be defined by a single dominant platform or a uniform model of interaction. Instead, it may evolve into a more diverse landscape, where multiple systems coexist, each optimized for different contexts and priorities. In such a landscape, the systems that achieve lasting impact will likely be those that can integrate functionality, trust and relevance into a coherent framework, enabling users to engage with digital environments in ways that are both effective and sustainable.

The trajectory outlined throughout this analysis does not point to a predetermined outcome, but to a set of conditions under which meaningful transformation becomes possible. The trust crisis that currently characterizes social media is not an endpoint but a catalyst, prompting a reexamination of how digital systems are designed and what they are expected to deliver. Whether this reexamination leads to incremental adjustments or more fundamental changes will depend on how emerging architectures evolve and how effectively they align with the needs of users and societies.

In this sense, the present moment can be understood as a period of transition, where the limitations of existing models are becoming clearer and the contours of new approaches are beginning to take shape. The systems that emerge from this transition will define not only the next phase of social media, but the broader structure of digital interaction in the years to come.